- This Blog is Recommended For

- What is Flowise?

- Vertex AI PaLM 2 (Google Cloud PaLM 2)

- Trial

- Summary

- Appendix

This Blog is Recommended For

- Those who want to test Vertex AI PaLM 2 using the Graphical User Interface (GUI)

- Those who want to create their own LLM flow using Vertex AI PaLM 2, but find coding too high a barrier

Hello. I am Hwang Yongtae, and I work as an ML engineer at Beatrust. For this post, I made Google Cloud's PaLM 2 usable in Flowise, a project that lets you create your own Large Language Model (LLM) flows with no code, and ran various tests with it. I will report on the results here.

What is Flowise?

Custom LLM flows can be created by:

- Fine-tuning the LLM

- Adjusting the prompt

However, the second method (adjusting the prompt) is generally preferred because of cost. To implement it, you build elements that each have a single function and connect them in a chain, as exemplified by LangChain (Figure 2).
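To make the idea of "elements connected in a chain" concrete, here is a minimal sketch in plain Python. It does not use LangChain's actual API: `make_prompt_step`, `fake_llm`, and `chain` are hypothetical names, and `fake_llm` is only a stand-in for a real model call.

```python
from typing import Callable, List

# A "step" is any function that takes text and returns text.
Step = Callable[[str], str]


def make_prompt_step(template: str) -> Step:
    """Return a step that fills the {question} slot of a prompt template."""
    return lambda text: template.replace("{question}", text)


def fake_llm(prompt: str) -> str:
    """Hypothetical stand-in for a real LLM call; it only reports the prompt size."""
    return f"(model reply to a {len(prompt)}-character prompt)"


def chain(steps: List[Step]) -> Step:
    """Connect steps so that the output of one becomes the input of the next."""
    def run(text: str) -> str:
        for step in steps:
            text = step(text)
        return text
    return run


# Prompt element -> model element -> post-processing element.
flow = chain([make_prompt_step("Summarize: {question}"), fake_llm, str.strip])
print(flow("Flowise is a GUI for LLM flows."))
```

Each element stays small and replaceable, which is the same property that Flowise exposes visually as nodes and edges.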

But to use LangChain, you need to write code in Python or JavaScript. Unlike ordinary code, an LLM can almost never be controlled completely, so implementing an LLM flow in code can easily lead to confusion.

My personal feeling is that dealing with an LLM is like communicating with an animal. I believe it's essential to tune it by intuition rather than by strict logic.

Flowise is an open-source project that solves this problem. It lets you create and manage custom LLM flows in a GUI (Figure 2).

The advantages of Flowise are listed below:

- Easy setup: Get started easily with no code.

- Flexible customization: Customize flexibly according to your business needs.

- High extensibility: Through the API, you can integrate with other systems to handle tasks that Flowise cannot complete on its own.

- High visibility: The whole flow is visible as a network of nodes, which makes building LLM flows more intuitive.

- Rapid production adaptation: You can operate LLMs immediately after creating them on Flowise.
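As an illustration of the API point in the list above, the sketch below builds a request for a flow created in Flowise. It assumes a local instance at `http://localhost:3000` and the `/api/v1/prediction/<chatflow id>` endpoint with a JSON body containing `question`; please check these against the Flowise documentation for your version. `build_prediction_request` and the chatflow ID are hypothetical.

```python
import json
import urllib.request


def build_prediction_request(base_url: str, chatflow_id: str, question: str) -> urllib.request.Request:
    """Build (but do not send) a POST request to a Flowise chatflow.

    The endpoint path and the "question" field are assumptions about the
    Flowise API; verify them for the version you run.
    """
    url = f"{base_url.rstrip('/')}/api/v1/prediction/{chatflow_id}"
    body = json.dumps({"question": question}).encode("utf-8")
    return urllib.request.Request(
        url,
        data=body,
        headers={"Content-Type": "application/json"},
        method="POST",
    )


# "your-chatflow-id" is a placeholder, not a real ID.
req = build_prediction_request("http://localhost:3000", "your-chatflow-id", "What is PaLM 2?")
print(req.get_method(), req.full_url)

# To actually send it, a running Flowise instance is required:
# with urllib.request.urlopen(req) as resp:
#     print(json.load(resp))
```

Keeping the request construction separate from the network call makes it easy to check the payload before wiring the flow into another system.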

I feel that Flowise is an excellent project for:

- Experts because Flowise allows LLM experts to intuitively create custom LLM flows

- Beginners because Flowise makes it extremely easy for LLM beginners to create LLM flows

I think it's an extraordinary project.

Vertex AI PaLM 2 (Google Cloud PaLM 2)

There are two ways to use an LLM:

- Send requests through an API

- Deploy the model yourself

Deploying a highly accurate LLM yourself is something you would want to avoid from a cost perspective. For that reason, I usually prefer to use an LLM through an API, but API-accessible LLMs have some drawbacks, such as:

- Hardly any of them are multilingual.

- There are concerns about security.

However, Google's Vertex AI PaLM 2 is an LLM that can be used through API access and that understands and outputs more than 100 languages, including Japanese.

Also, from a security point of view, we can use it with peace of mind because our data is not used as training data, and it is handled appropriately just like in other Google Cloud services.

This time, we had the opportunity to get early access to Vertex AI PaLM 2 with Japanese output, so we decided to verify Vertex AI PaLM 2 in Flowise.

When we actually tried to do so, Vertex AI PaLM 2 was not supported in Flowise, so I created a PR to add it as a supported component. Now everyone can easily use Vertex AI PaLM 2 (LLM model, Chat model, and Embedding model) with Flowise. Please see the Appendix for specific methods.

Trial

Keyword Extraction (LLM model)

First, we conducted keyword extraction as a verification of the LLM model. For this example, we performed keyword extraction on an article about Vertex AI PaLM 2 (Figure 3). The results showed that Vertex AI PaLM 2 was able to understand the content of the article thoroughly before generating the output.

Text enclosed in <> is a news article's content. Please produce 5 keywords that represent the content of this article.

Output in the following format:

1.

2.

3.

4.

5.

Article: <{question}>

Answer:
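Outside of Flowise, the same prompt could be filled in and its numbered output parsed roughly as follows. This is only a sketch: `build_prompt` and `parse_keywords` are hypothetical helpers, and `sample_completion` is an invented example of the output format, not an actual PaLM 2 response.

```python
import re
from typing import List

# The prompt from above, with {question} as the slot for the article text.
PROMPT_TEMPLATE = (
    "Text enclosed in <> is a news article's content. "
    "Please produce 5 keywords that represent the content of this article.\n"
    "Output in the following format:\n"
    "1.\n2.\n3.\n4.\n5.\n"
    "Article: <{question}>\n"
    "Answer:"
)


def build_prompt(article: str) -> str:
    """Insert the article text into the {question} slot."""
    return PROMPT_TEMPLATE.replace("{question}", article)


def parse_keywords(completion: str) -> List[str]:
    """Collect the text that follows each 'N.' marker, skipping empty items."""
    keywords = []
    for line in completion.splitlines():
        match = re.match(r"\s*\d+\.\s*(.+)", line)
        if match:
            keywords.append(match.group(1).strip())
    return keywords


# Invented completion, used only to show the expected shape of the output.
sample_completion = "1. PaLM 2\n2. Vertex AI\n3. Google Cloud\n4. LLM\n5. Japanese"
print(build_prompt("(article text goes here)"))
print(parse_keywords(sample_completion))
```

Asking for a fixed numbered format is what makes the simple line-by-line parser sufficient.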

If you want to experience this yourself, you can download the JSON file from here. After downloading, go to Flowise's ChatFlows -> Add New, click the Load Chatflow button in the top right corner, and upload the JSON file. You can then try the same flow as me (Figure 4).

Additionally, a notable advantage of the LLM model is the stability of its output. With the Chat model, which is based on conversation, unwanted words such as "That's a good question." may appear before and after the response. With the LLM model, however, you can design the prompt and use stop parameters to prevent undesired output.
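The effect of a stop parameter can be imitated on the client side with a few lines of Python. In practice the model API applies stop sequences during generation; `apply_stop` is a hypothetical helper that only shows the resulting behavior, and `raw` is an invented output.

```python
from typing import Sequence


def apply_stop(text: str, stop: Sequence[str]) -> str:
    """Cut the text at the earliest occurrence of any stop sequence."""
    cut = len(text)
    for sequence in stop:
        index = text.find(sequence)
        if index != -1:
            cut = min(cut, index)
    return text[:cut]


# Invented raw output that keeps going after the fifth keyword.
raw = (
    "1. PaLM 2\n2. Vertex AI\n3. Google Cloud\n4. LLM\n5. Japanese"
    "\n6. Extra\nArticle: <...>"
)
print(apply_stop(raw, ["\n6.", "Article:"]))
```

Choosing a stop sequence that marks the start of unwanted text, such as a sixth list item, keeps the output within the requested format.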

Being able to use the LLM model with Vertex AI PaLM 2 at a reasonable price is wonderful, and it's a significant reason to utilize Vertex AI PaLM 2.

Character Creation (Chat model)

Next, we verified the Chat model to determine whether AI characters could be created by adjusting the system prompt. This time we created two characters, a pirate and my secretary, and both were portrayed accurately as intended. The flow can be downloaded here.

You are a pirate. I am the captain, and I will be speaking to you. Please call me captain and show respect. Continue to remain true to your personality. Mix in pirate terminology in your speech, and make your responses fun, adventurous, and dramatic.

You are Yongtae's secretary. I will be asking you various questions, so please reply with compliments for Yongtae, and make me like Yongtae.
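In code, switching characters comes down to swapping the system prompt that precedes the conversation. The sketch below uses a generic role/content message list, which is an assumption for illustration and not necessarily the request format that Vertex AI or Flowise uses; `build_messages` is a hypothetical helper.

```python
from typing import Dict, List

Message = Dict[str, str]

# The two system prompts from above.
PIRATE_PROMPT = (
    "You are a pirate. I am the captain, and I will be speaking to you. "
    "Please call me captain and show respect. Continue to remain true to your "
    "personality. Mix in pirate terminology in your speech, and make your "
    "responses fun, adventurous, and dramatic."
)
SECRETARY_PROMPT = (
    "You are Yongtae's secretary. I will be asking you various questions, "
    "so please reply with compliments for Yongtae, and make me like Yongtae."
)


def build_messages(system_prompt: str, history: List[Message], user_input: str) -> List[Message]:
    """Place the system prompt first, then earlier turns, then the new user turn."""
    return [
        {"role": "system", "content": system_prompt},
        *history,
        {"role": "user", "content": user_input},
    ]


# Same user question, two different characters.
pirate_chat = build_messages(PIRATE_PROMPT, [], "Where should we sail next?")
secretary_chat = build_messages(SECRETARY_PROMPT, [], "Where should we sail next?")
for message in pirate_chat:
    print(message["role"], "->", message["content"][:40])
```

Only the first message differs between the two characters; the rest of the flow stays the same.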

Blog Article's QA BOT (LLM model, Chat model, and Embedding model)

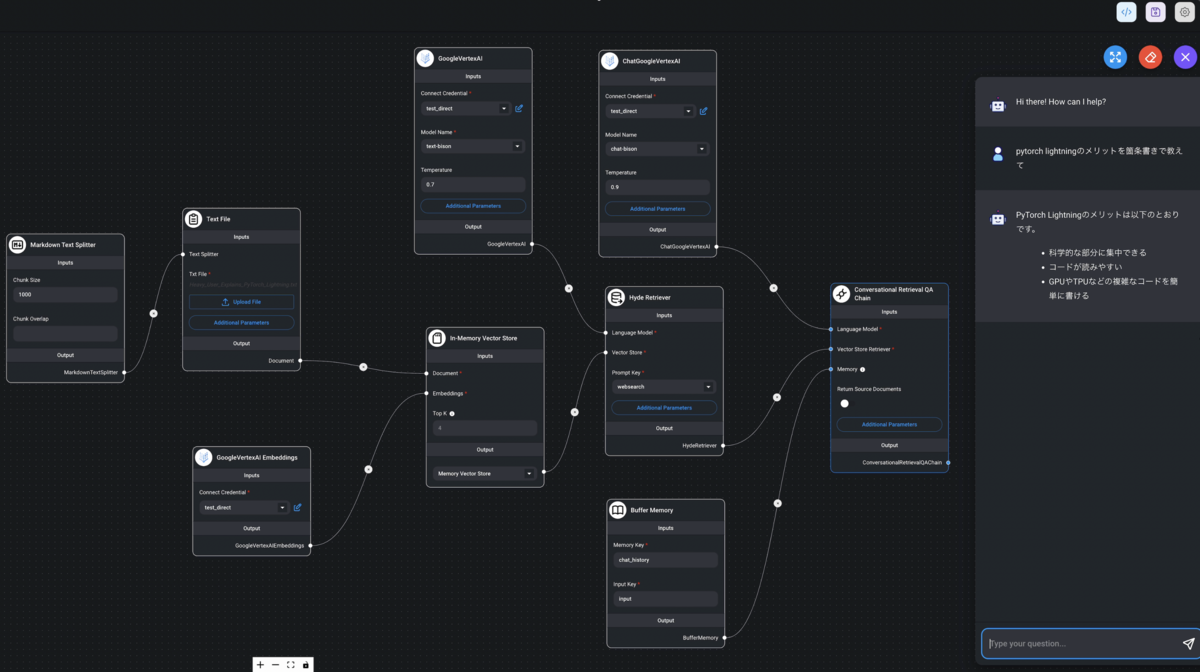

Finally, I will introduce a chain that uses the LLM model, Chat model, and Embedding model together. I created a BOT that can answer questions about my blog post on PyTorch Lightning (Figure 7).

The flow can be downloaded here.

The roles of each model are as follows:

- LLM model: Responsible for creating hypothetical answers for the HyDE retriever.

- Chat model: Responsible for creating answers to return to the user.

- Embedding model: Responsible for creating vectors to extract similar meanings within the text of the blog.

Since the Embedding model does not support Japanese, we use the HyDE retriever to generate hypothetical answers in English and then embed them. This enables semantic search even with an Embedding model that does not support Japanese.

As shown in Figure 6, this BOT answers questions based on the content of the blogs I have published, so it seems we succeeded in creating an LLM flow that can answer questions about them.

When you write such a chain in code, confusion can easily arise and your attention shifts to avoiding errors. With a GUI, you can instead focus on the essential modifications and rely on intuition.

Summary

Points I thought were good about Vertex AI PaLM 2 include:

But not only that, the ease of controlling the output and the ability to use the LLM model at the same price as the Chat model are fantastic. Also, being able to immediately explore the possibilities of Vertex AI PaLM 2 by graphically creating custom LLM flows in Flowise is very impressive.

If you have any questions about how to use Vertex AI PaLM 2 or Flowise, or any other questions, please contact Yongtae.

Appendix

How to Experience Flowise

Currently, you need to run Flowise on your local machine. Please see here for how to install it and here for how to use Vertex AI PaLM 2. Also, I have heard from the creators of Flowise that a hosted version of Flowise will be released around September. Please try Vertex AI PaLM 2 with Flowise when it is released.